SATO Wataru Laboratory

Direction of amygdala–neocortex interaction during dynamic facial expression processing

(Sato*, Kochiyama*, Uono, Yoshikawa, & Toichi (* equal contributors): Cereb Cortex)

Dynamic facial expressions of emotion strongly elicit multifaceted emotional, perceptual, cognitive, and motor responses.

Neuroimaging studies have shown that some subcortical (e.g., amygdala) and neocortical (e.g., superior temporal sulcus and inferior frontal gyrus) brain regions, and their functional interaction, are involved in processing dynamic facial expressions.

However, the direction of the functional interaction between the amygdala and the neocortex remains unknown.

To investigate this issue, we re-analyzed functional magnetic resonance imaging (fMRI) data from 2 studies and magnetoencephalography (MEG) data from 1 study.

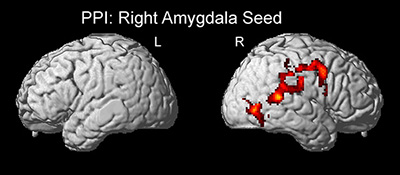

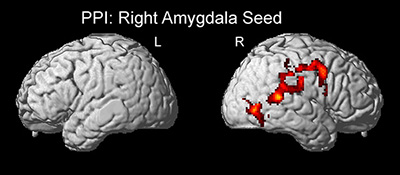

First, a psychophysiological interaction (PPI) analysis of the fMRI data confirmed the functional interaction between the amygdala and neocortical regions.
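As a rough illustration of the idea behind a PPI analysis, the sketch below builds the interaction regressor as the product of a mean-centered seed-region time course and a mean-centered task variable. It is a simplified sketch, not the pipeline used in the study: the function names and the toy numbers are hypothetical, and full PPI implementations typically form the interaction at the estimated neural level and then convolve it with a hemodynamic response, which is omitted here.

```python
def center(values):
    """Subtract the mean so the regressor has zero mean."""
    mean = sum(values) / len(values)
    return [v - mean for v in values]


def ppi_regressors(seed_signal, task_variable):
    """Return (physiological, psychological, interaction) regressors.

    seed_signal   : time course of the seed region (e.g., amygdala), hypothetical data
    task_variable : condition code per time point (e.g., +1 dynamic, -1 control)
    Simplification: no deconvolution or hemodynamic convolution is applied.
    """
    if len(seed_signal) != len(task_variable):
        raise ValueError("seed_signal and task_variable must have equal length")
    physiological = center(seed_signal)
    psychological = center(task_variable)
    # The PPI term is the element-wise product of the two centered regressors.
    interaction = [p * c for p, c in zip(physiological, psychological)]
    return physiological, psychological, interaction


# Toy example with made-up values (not data from the study).
seed = [1.0, 2.0, 3.0, 4.0]
task = [1.0, 1.0, -1.0, -1.0]
phys, psych, ppi = ppi_regressors(seed, task)
```

In a regression on a target region, a reliable effect of the interaction term (over and above the two main-effect regressors) is what indicates that coupling with the seed region changes with the task condition.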

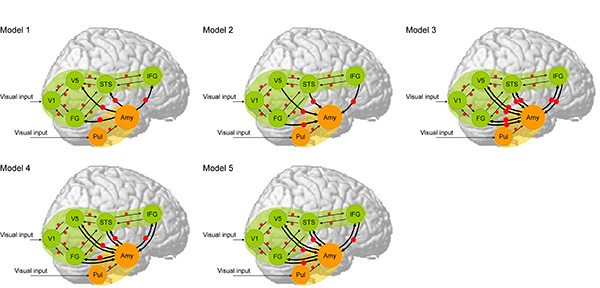

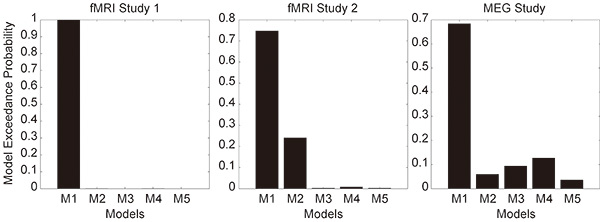

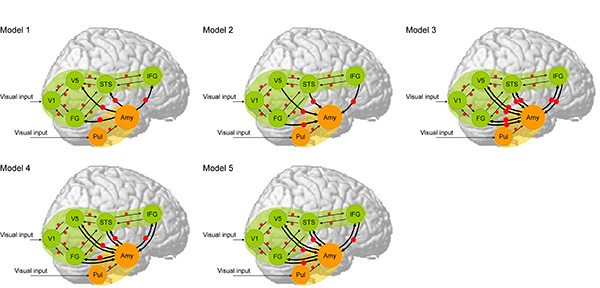

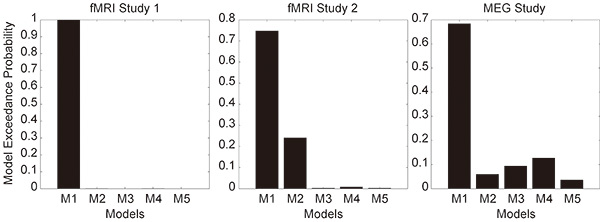

Then, dynamic causal modeling analysis was used to compare models with forward, backward, or bi-directional effective connectivity between the amygdala and neocortical networks in the fMRI and MEG data.

The results consistently supported the model of effective connectivity from the amygdala to the neocortex.
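The logic of comparing competing connectivity models can be sketched with a generic Bayesian model comparison. The example below converts log model evidences into posterior model probabilities under a uniform prior over models. This is an assumption-laden illustration: the summary does not state which model selection procedure was used, the model labels are hypothetical, and the log-evidence values are invented purely to show the arithmetic.

```python
import math


def posterior_model_probabilities(log_evidence):
    """Convert log model evidences to posterior probabilities.

    Assumes a uniform prior over models. The maximum log evidence is
    subtracted before exponentiating to avoid numerical underflow.
    """
    top = max(log_evidence.values())
    weights = {name: math.exp(value - top) for name, value in log_evidence.items()}
    total = sum(weights.values())
    return {name: weight / total for name, weight in weights.items()}


# Invented log evidences for three hypothetical models (not results from the study).
log_evidence = {
    "amygdala_to_neocortex": -1000.0,
    "neocortex_to_amygdala": -1005.0,
    "bidirectional": -1003.0,
}

probabilities = posterior_model_probabilities(log_evidence)
best_model = max(probabilities, key=probabilities.get)
```

Only differences in log evidence matter here, which is why the same ranking is obtained regardless of the absolute scale of the values.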

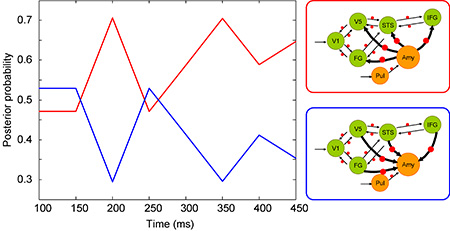

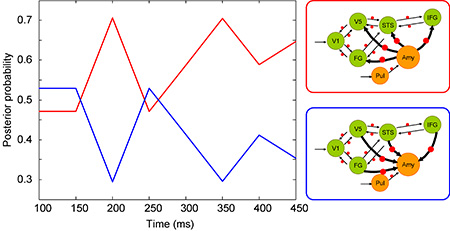

Furthermore, an increasing time-window analysis of the MEG data demonstrated that this model was valid after 200 ms from stimulus onset.
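One way to picture an increasing time-window analysis is as a series of nested windows over which the same models are re-fitted and compared. The sketch below generates such windows, assuming each window starts at stimulus onset and only its end point grows. The step size and maximum latency are hypothetical parameters chosen for illustration, not values reported for the study.

```python
def increasing_time_windows(step_ms, max_ms, onset_ms=0):
    """Return nested (start, end) windows in milliseconds.

    Every window starts at onset_ms; the end point grows by step_ms
    until it reaches max_ms. Both parameters are illustrative assumptions.
    """
    if step_ms <= 0 or max_ms < onset_ms + step_ms:
        raise ValueError("step_ms must be positive and fit within max_ms")
    return [(onset_ms, end) for end in range(onset_ms + step_ms, max_ms + 1, step_ms)]


# Hypothetical settings: 100 ms steps up to 400 ms after stimulus onset.
windows = increasing_time_windows(step_ms=100, max_ms=400)
```

Model comparison would then be repeated once per window, so that the earliest window in which one model is clearly preferred can be identified.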

These data suggest that emotional processing in the amygdala rapidly modulates some neocortical processing, such as perception, recognition, and motor mimicry, when observing dynamic facial expressions of emotion.
