SATO Wataru Laboratory

The widespread action observation/execution matching system for facial expression processing

(Sato*, Kochiyama*, & Yoshikawa (* equal contributors): Hum Brain Mapp)

Observing and understanding others' emotional facial expressions, possibly through motor synchronization, plays a primary role in face-to-face communication.

To understand the underlying neural mechanisms, previous fMRI studies investigated brain regions involved in both the observation and execution of emotional facial expressions, and found that the neocortical motor regions constituting the action observation/execution matching system, or mirror neuron system, were active.

However, it remains unclear (1) whether regions in the limbic system, cerebellum, and brainstem are also involved in the observation/execution matching system for processing facial expressions, and (2) if so, whether these regions constitute a functional network.

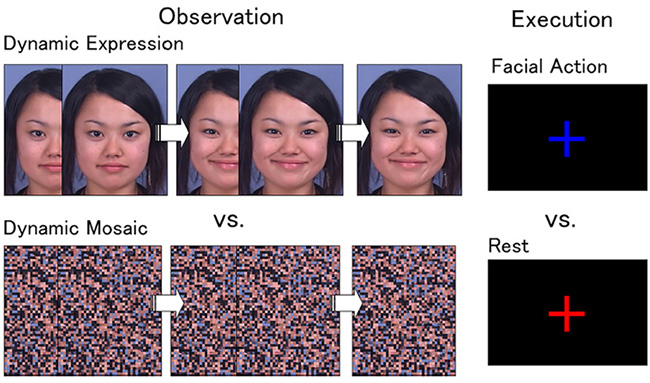

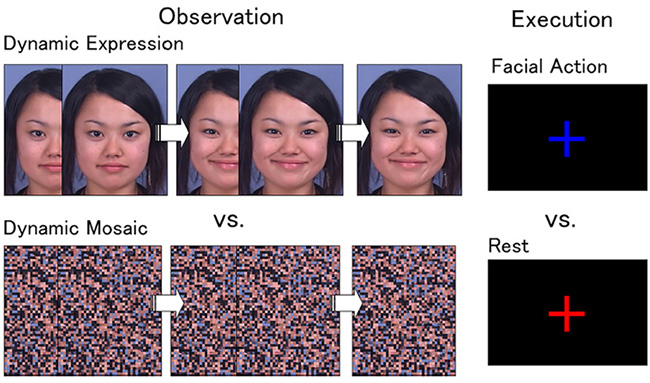

To investigate these issues, we performed fMRI while participants observed dynamic facial expressions of anger and happiness and while they executed facial muscle activity associated with angry and happy facial expressions.

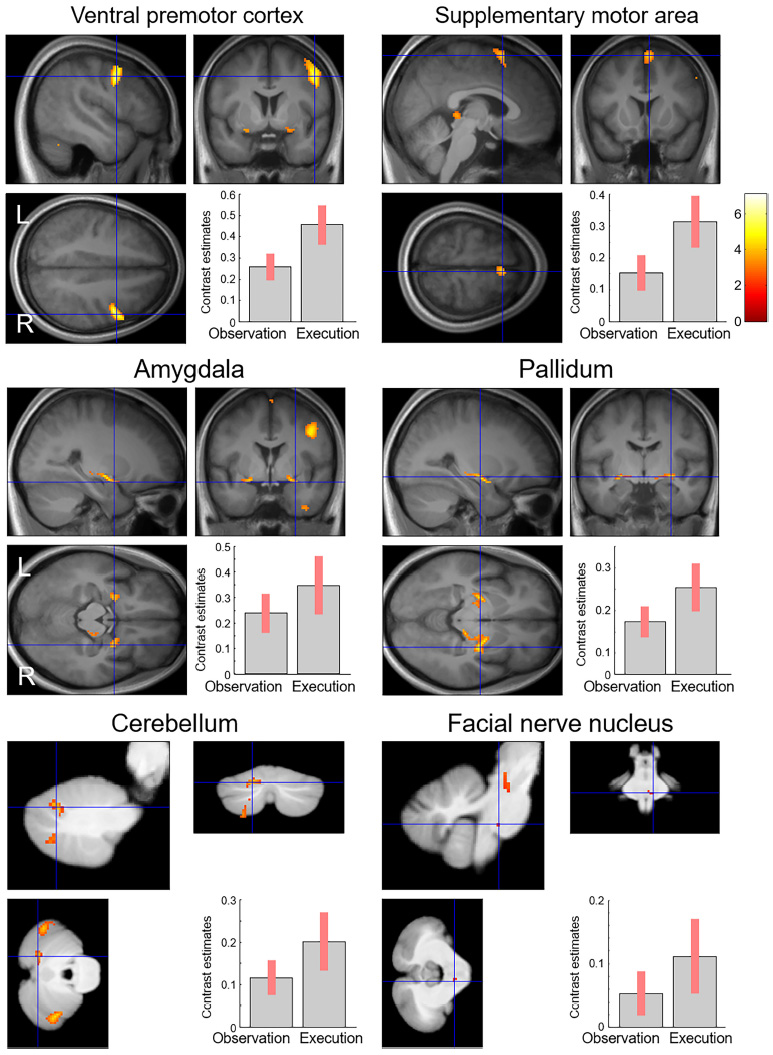

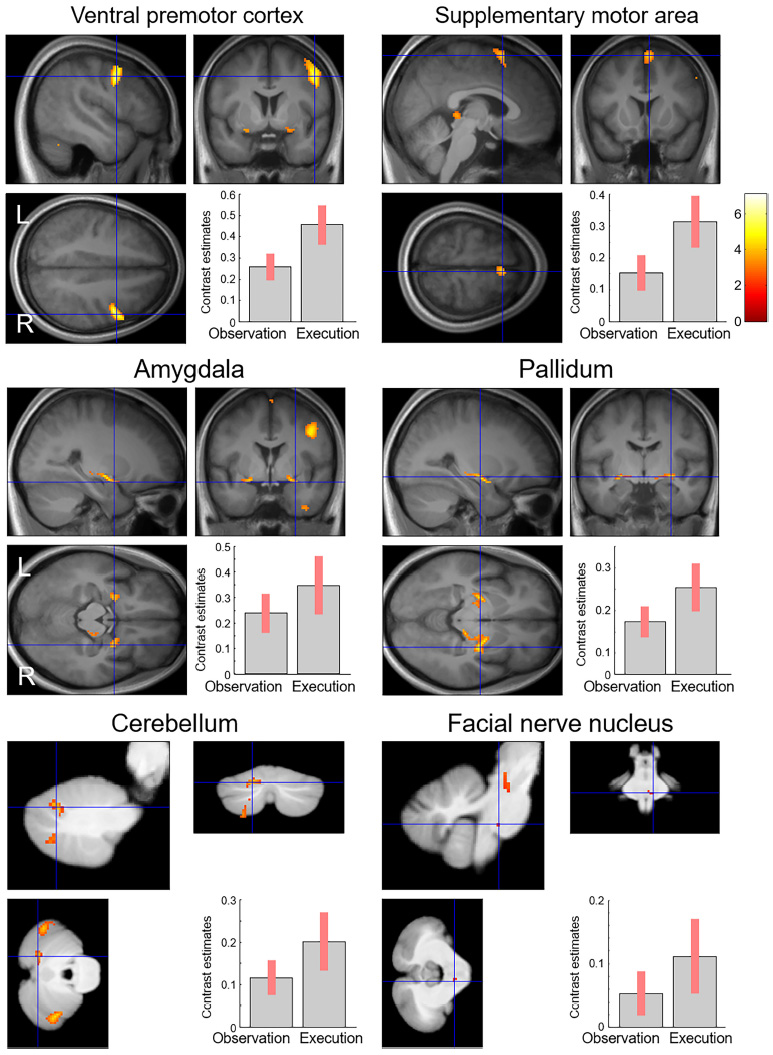

Conjunction analyses revealed that, in addition to neocortical regions (i.e., the right ventral premotor cortex and right supplementary motor area), the bilateral amygdala, right basal ganglia, bilateral cerebellum, and right facial nerve nucleus were activated during both the observation and execution tasks.
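
The idea behind a conjunction analysis can be illustrated with a minimal sketch. It assumes a minimum-statistic conjunction, in which a voxel counts as jointly active only if its statistic exceeds the threshold in both tasks. The summary above does not state which conjunction method or threshold was used, so the function name `conjunction_mask`, the toy t-values, and the threshold of 3.1 are all hypothetical and for illustration only.

```python
import numpy as np


def conjunction_mask(t_obs, t_exec, threshold):
    """Return a boolean mask of voxels exceeding `threshold` in BOTH maps.

    Hypothetical helper: implements a minimum-statistic conjunction,
    which is an assumption and not necessarily the method used in the study.
    """
    t_obs = np.asarray(t_obs, dtype=float)
    t_exec = np.asarray(t_exec, dtype=float)
    if t_obs.shape != t_exec.shape:
        raise ValueError("statistic maps must have the same shape")
    # A voxel survives only if the smaller of its two statistics is above threshold.
    return np.minimum(t_obs, t_exec) > threshold


# Toy t-values for four voxels (made-up numbers, not data from the study).
t_obs = np.array([4.2, 1.0, 3.5, 0.2])   # observation task
t_exec = np.array([3.9, 4.5, 0.8, 0.1])  # execution task

mask = conjunction_mask(t_obs, t_exec, threshold=3.1)
print(mask)
```

In this toy example only the first voxel is above threshold in both tasks, so it is the only one that would be reported as part of an observation/execution matching region.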

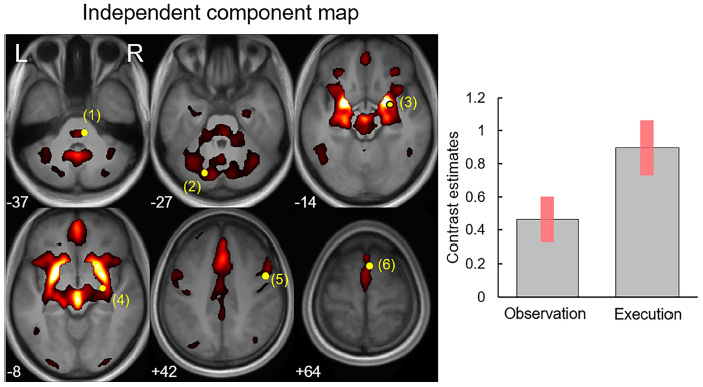

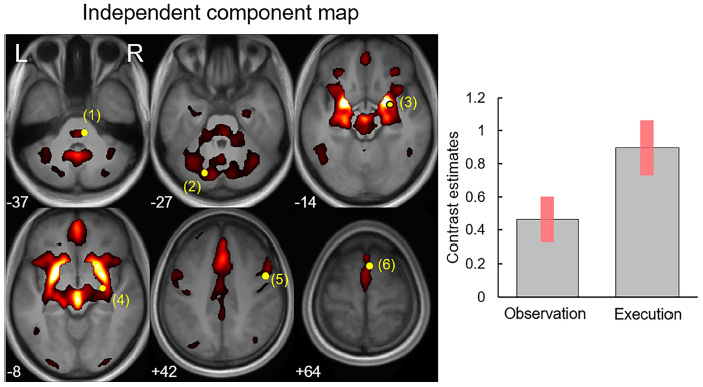

Group independent component analysis revealed that a functional network component involving the aforementioned regions was activated during both the observation and execution tasks.

The data suggest that the motor synchronization of emotional facial expressions involves a widespread observation/execution matching network encompassing the neocortex, limbic system, basal ganglia, cerebellum, and brainstem.
